Agent Identity and Credential Brokers: Access Without Oversharing Secrets

The fastest way to turn a clever agent into a security incident is to hand it a long-lived API key and wish it well.

That pattern was shaky in 2024. In 2026 it is indefensible. Agents are not single API callers anymore. They browse, draft, approve, reconcile, sync, and hand work across systems. If you give that kind of software broad standing access, you have not built an automation layer. You have built a very articulate insider threat.

This guide covers the safer pattern: give agents identity, route access through a credential broker, and issue permissions that are narrow, time-bound, and auditable.

TL;DR

Do not hardwire secrets into agents. Give each agent or run a runtime identity, use a broker to mint short-lived credentials, scope access to the task at hand, and log every approval, token issuance, and sensitive action.

Why traditional secret handling breaks with agents

A normal backend service usually has a clear role. It serves one app, talks to a known set of systems, and executes deterministic code paths. Agents are different. Their behavior is conditional, tool-driven, and often semi-open-ended.

That makes broad credentials much more dangerous. A single secret might unlock:

- customer data

- billing changes

- code repositories

- internal messaging

- production dashboards

Once an agent can choose how to use those privileges, the blast radius is every system the secret can reach, not just the task at hand. The old "just store it in the environment" habit no longer matches the operational reality.

Think in identities, not secrets

The right mental model is not "What key should the agent have?" It is "Who is this agent right now, and what is it allowed to do in this run?"

That shift matters. An identity-based design lets you answer:

- which agent acted

- on whose behalf it acted

- in what workflow it acted

- under which policy it acted

- for how long the permission was valid

Those are the questions auditors, security teams, and incident responders actually care about. "It used the integration key in Vault" is not much of an explanation when the finance agent has just tried to refund half your customers.
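As a minimal sketch, the five questions above can be carried as one record attached to each run. The class name, field names, and sample values below are illustrative assumptions, not taken from any particular framework.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class RunIdentity:
    """Hypothetical identity for a single agent run (names are illustrative)."""
    agent_id: str        # which agent acted
    on_behalf_of: str    # on whose behalf it acted
    workflow: str        # in what workflow it acted
    policy_id: str       # under which policy it acted
    issued_at: datetime  # when the permission became valid
    ttl: timedelta       # for how long the permission is valid

    def is_valid(self, now: datetime) -> bool:
        # Valid from issuance up to, but not including, the expiry instant.
        return self.issued_at <= now < self.issued_at + self.ttl


issued = datetime(2026, 1, 15, 9, 0, tzinfo=timezone.utc)
identity = RunIdentity(
    agent_id="finance-agent",
    on_behalf_of="user:jdoe",
    workflow="refund-review",
    policy_id="policy-refunds-v1",
    issued_at=issued,
    ttl=timedelta(minutes=10),
)

print(identity.is_valid(issued + timedelta(minutes=5)))   # True
print(identity.is_valid(issued + timedelta(minutes=15)))  # False
```

Because the record is frozen, a run cannot quietly widen its own identity after issuance; a new run gets a new record.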

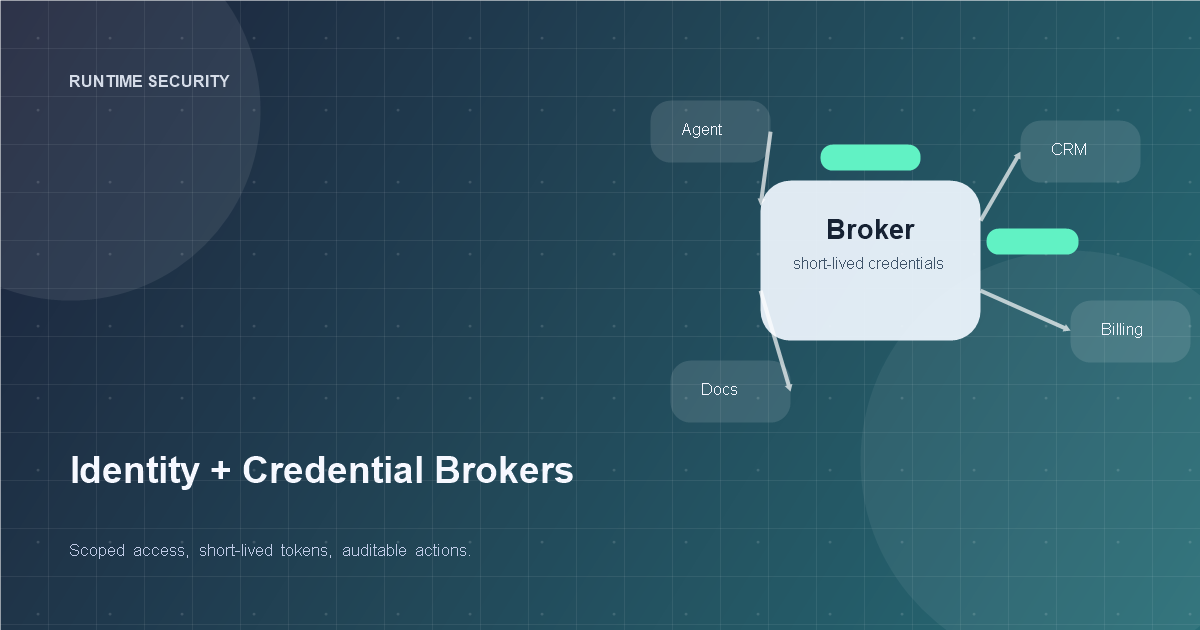

The credential broker pattern

A credential broker sits between the agent and the systems it wants to use. The agent does not receive permanent secrets. Instead, it requests a scoped credential for a specific action.

For example:

- the agent asks to read one CRM account

- the broker checks the policy

- the broker verifies whether human approval is required

- the broker mints a short-lived token

- the action is logged with task context

That token should be narrow in scope, short in duration, and useless outside the approved action window. If the run goes sideways, the permission expires quickly and the audit trail is intact.

This is the operational version of least privilege, adapted for systems that plan before they act.
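The request flow above could be sketched roughly as follows. This is an in-memory illustration under stated assumptions: the `CredentialBroker` class, its policy sets, and action names such as `crm:read` are hypothetical, and a real broker would mint credentials through the target system's own token service rather than generate random strings.

```python
import secrets
import time
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class ScopedToken:
    value: str
    action: str
    target: str
    expires_at: float  # Unix timestamp


@dataclass
class CredentialBroker:
    allowed: set         # (agent, action) pairs permitted by policy
    needs_approval: set  # actions that require human sign-off
    ttl_seconds: int = 300
    audit_log: list = field(default_factory=list)

    def request(self, agent, action, target, run_id,
                approved=False, now=None) -> Optional[ScopedToken]:
        now = time.time() if now is None else now
        if (agent, action) not in self.allowed:
            decision = "denied"
        elif action in self.needs_approval and not approved:
            decision = "approval_required"
        else:
            decision = "issued"
        # Every request is logged with task context, whatever the outcome.
        self.audit_log.append({
            "run_id": run_id, "agent": agent, "action": action,
            "target": target, "decision": decision, "at": now,
        })
        if decision != "issued":
            return None
        return ScopedToken(secrets.token_urlsafe(32), action, target,
                           now + self.ttl_seconds)


broker = CredentialBroker(
    allowed={("support-agent", "crm:read"), ("support-agent", "crm:write")},
    needs_approval={"crm:write"},
)
token = broker.request("support-agent", "crm:read", "account/123",
                       run_id="run-1", now=1000.0)
blocked = broker.request("support-agent", "crm:write", "account/123",
                         run_id="run-1", now=1000.0)
```

Here `token` is issued for the read, while `blocked` is `None` because the write has not been approved. Both attempts land in `audit_log`.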

Scope access to the job, not the agent brand

Many teams make the mistake of granting permissions to a generic agent role such as support-agent or ops-agent. That is too coarse. A better model scopes permissions to the actual job instance.

Useful dimensions include:

- agent type

- workspace or tenant

- user delegation context

- requested tool

- action type

- object or record target

- time window

In plain English, "support-agent can do CRM things" is weak policy. "This support triage run may read ticket 4812 and append an internal note for the next ten minutes" is much stronger.

It also makes approval systems cleaner, because the reviewer can see exactly what the agent is asking for instead of approving a vague aura of competence.
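To make the ticket 4812 example concrete, here is one way a job-scoped grant might be represented and checked. The `Grant` and `AccessRequest` types, the `permits` function, and all values are assumptions made for illustration.

```python
from dataclasses import dataclass, replace
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Grant:
    agent_type: str
    tenant: str
    delegated_by: str
    tool: str
    actions: frozenset
    target: str
    not_before: datetime
    not_after: datetime


@dataclass(frozen=True)
class AccessRequest:
    agent_type: str
    tenant: str
    delegated_by: str
    tool: str
    action: str
    target: str
    at: datetime


def permits(grant: Grant, req: AccessRequest) -> bool:
    # Every dimension must match; there is no wildcard fallback.
    return (
        grant.agent_type == req.agent_type
        and grant.tenant == req.tenant
        and grant.delegated_by == req.delegated_by
        and grant.tool == req.tool
        and req.action in grant.actions
        and grant.target == req.target
        and grant.not_before <= req.at < grant.not_after
    )


start = datetime(2026, 1, 15, 9, 0, tzinfo=timezone.utc)
grant = Grant(
    agent_type="support-triage",
    tenant="acme",
    delegated_by="user:rep42",
    tool="ticketing",
    actions=frozenset({"read", "append_internal_note"}),
    target="ticket/4812",
    not_before=start,
    not_after=start + timedelta(minutes=10),
)
req = AccessRequest("support-triage", "acme", "user:rep42", "ticketing",
                    "read", "ticket/4812", start + timedelta(minutes=3))

print(permits(grant, req))                                           # True
print(permits(grant, replace(req, target="ticket/4813")))            # False
print(permits(grant, replace(req, at=start + timedelta(minutes=11))))  # False
```

The same `Grant` object is also what a reviewer would see in an approval queue: one ticket, two actions, ten minutes.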

Use human delegation explicitly

Many enterprise agent workflows act on behalf of a human. That should be explicit. When an agent performs work for a manager, analyst, or support rep, the system should carry that delegation context throughout the run.

That means every sensitive action should answer:

- which human initiated or delegated the task

- which agent executed it

- whether the action was advisory or autonomous

- whether additional approval was required

This becomes especially important in regulated environments and internal audits. If your system cannot separate "the agent suggested this" from "the agent executed this under delegated authority," your control story is going to collapse under scrutiny.

For more on operational controls in regulated settings, see /posts/regulated-agent-playbook.

Short-lived credentials are the default, not the premium option

Short-lived tokens used to feel like extra engineering. With agents, they are table stakes.

Why they matter:

- stolen tokens expire quickly

- overbroad permissions are easier to constrain

- approvals can be bound to a time window

- retries do not silently inherit yesterday's access

Teams often resist this because brokered credentials sound complicated. In practice, the complexity is lower than cleaning up after a long-lived secret leaks into logs, traces, memory, or prompt history.

And yes, agents are very good at accidentally putting secrets in places you did not intend.
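The expiry behavior can be illustrated with a small signed token built from the Python standard library. This is a teaching sketch only: the `mint` and `verify` helpers and the claim names are hypothetical, and a production system would more likely rely on an established token format or a cloud provider's short-lived credential service than on hand-rolled signing.

```python
import base64
import hashlib
import hmac
import json


def mint(claims: dict, key: bytes, now: float, ttl: int) -> str:
    """Return 'payload.signature' with an expiry claim baked in."""
    payload = dict(claims, exp=now + ttl)
    body = base64.urlsafe_b64encode(
        json.dumps(payload, sort_keys=True).encode()
    ).decode()
    sig = hmac.new(key, body.encode(), hashlib.sha256).hexdigest()
    return body + "." + sig


def verify(token: str, key: bytes, now: float):
    """Return the claims if the signature is valid and unexpired, else None."""
    body, _, sig = token.rpartition(".")
    expected = hmac.new(key, body.encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(sig.encode(), expected.encode()):
        return None
    claims = json.loads(base64.urlsafe_b64decode(body))
    if now >= claims["exp"]:
        return None  # expired: a retry must request a fresh credential
    return claims


key = b"demo-signing-key"  # illustrative only; never hardcode real keys
token = mint({"run_id": "run-1", "action": "crm:read"}, key, now=1000.0, ttl=600)

print(verify(token, key, now=1200.0) is not None)  # True, inside the window
print(verify(token, key, now=1600.0))              # None, window has closed
```

A retry that arrives after the window gets `None` and has to go back through policy, which is the point: yesterday's approval does not carry over.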

Separate read, write, and dangerous write paths

Not all writes are equal. Appending an internal note is not the same as changing tax settings or moving money. Your broker policy should reflect that.

A simple model works well:

- Read: usually auto-approved if scoped correctly

- Write: approved by policy and sometimes by human review

- Dangerous write: always requires step-up approval or dual control

This is much more operationally useful than a generic "write permission" flag. It maps to how humans already think about risk, which makes adoption easier and review queues saner.
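One possible encoding of the three tiers is sketched below. The action names, the `decide` function, and the choice to model dual control as two human approvals are assumptions for illustration; unknown actions default to the most restrictive tier.

```python
from enum import Enum


class Risk(Enum):
    READ = "read"
    WRITE = "write"
    DANGEROUS_WRITE = "dangerous_write"


# Hypothetical action catalogue; real names depend on your tools.
ACTION_RISK = {
    "ticket.read": Risk.READ,
    "ticket.append_note": Risk.WRITE,
    "billing.refund": Risk.DANGEROUS_WRITE,
    "tax.update_settings": Risk.DANGEROUS_WRITE,
}


def decide(action: str, in_scope: bool,
           policy_allows_write: bool = False,
           human_approvals: int = 0) -> str:
    # Anything not in the catalogue is treated as a dangerous write.
    risk = ACTION_RISK.get(action, Risk.DANGEROUS_WRITE)
    if not in_scope:
        return "deny"
    if risk is Risk.READ:
        return "allow"
    if risk is Risk.WRITE:
        return "allow" if policy_allows_write else "needs_review"
    # Dangerous write: dual control, modelled here as two approvers.
    return "allow" if human_approvals >= 2 else "needs_step_up"


print(decide("ticket.read", in_scope=True))                         # allow
print(decide("ticket.append_note", in_scope=True))                  # needs_review
print(decide("billing.refund", in_scope=True, human_approvals=1))   # needs_step_up
```

Defaulting unknown actions to the dangerous tier means a newly added tool cannot slip through as an auto-approved read.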

If your current approval design is fuzzy, pair this pattern with /posts/human-handoff-playbook-for-ai-agents.

Keep secrets out of prompts, memory, and traces

Credential brokers solve only part of the problem. You also need hygiene rules around where sensitive material can travel.

Do not allow:

- raw secrets in prompt context

- tokens stored in long-term memory

- credentials embedded in debugging traces

- tool outputs that echo secret material back into the conversation

This requires redaction and structured tool wrappers, not just "please be careful" prompt text. Agents should operate on references, handles, and approved actions whenever possible, not on the raw secret itself.

That design also makes observability safer because you can replay the mission without accidentally replaying the crown jewels.
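A rough sketch of both ideas, handles instead of raw secrets and redaction of tool output, might look like this. The `HandleVault` class and the regex patterns are illustrative assumptions; pattern-based redaction is a backstop and will not catch every secret format.

```python
import re
import secrets

# Illustrative patterns only; tune these for the credentials you actually use.
SECRET_PATTERNS = [
    re.compile(r"(?i)bearer\s+[a-z0-9._\-]+"),
    re.compile(r"(?i)(api[_-]?key|token|password)\s*[=:]\s*\S+"),
]


def redact(text: str) -> str:
    """Replace anything matching a known secret pattern."""
    for pattern in SECRET_PATTERNS:
        text = pattern.sub("[REDACTED]", text)
    return text


class HandleVault:
    """Hypothetical wrapper: the agent sees handles, tools see secrets."""

    def __init__(self):
        self._secrets = {}

    def put(self, secret: str) -> str:
        handle = "cred_" + secrets.token_hex(8)
        self._secrets[handle] = secret
        return handle

    def use(self, handle: str, tool) -> str:
        secret = self._secrets[handle]
        output = str(tool(secret))
        # Scrub the exact secret first, then apply the generic patterns.
        return redact(output.replace(secret, "[REDACTED]"))


vault = HandleVault()
handle = vault.put("s3cr3t-value")

# A badly behaved tool that echoes its credential back.
echoed = vault.use(handle, lambda s: f"called API with {s}")
print(echoed)  # called API with [REDACTED]
print(redact("Authorization: Bearer abc.def-123"))  # Authorization: [REDACTED]
```

Only `handle` ever needs to appear in prompt context, memory, or traces, so a replayed run contains a reference that is meaningless outside the vault.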

What a good audit trail looks like

A healthy audit record for brokered agent access should show:

- run ID

- agent identity

- human delegation context

- requested permission

- approval result

- credential lifetime

- target system

- action outcome

That gives operations, security, and compliance a common source of truth. It also makes post-incident review much faster because the answer is not buried inside a general-purpose application log.

If you are serious about operating agents in production, this data should sit alongside the rest of your runtime telemetry, not in a forgotten corner of a secrets platform.
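As one possible shape for such a record, the fields listed above map directly onto a small structure that serializes to a single JSON line, which most telemetry pipelines can ingest. The `AuditRecord` class and the sample values are assumptions for illustration.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class AuditRecord:
    run_id: str
    agent_identity: str
    delegated_by: str            # human delegation context
    requested_permission: str
    approval_result: str
    credential_ttl_seconds: int  # credential lifetime
    target_system: str
    action_outcome: str

    def to_json_line(self) -> str:
        # Sorted keys keep the output stable for diffing and indexing.
        return json.dumps(asdict(self), sort_keys=True)


record = AuditRecord(
    run_id="run-1",
    agent_identity="support-triage",
    delegated_by="user:rep42",
    requested_permission="ticket/4812:append_internal_note",
    approval_result="auto_approved",
    credential_ttl_seconds=600,
    target_system="ticketing",
    action_outcome="success",
)
line = record.to_json_line()
print(line)
```

Note that the record holds the credential's lifetime, never the credential itself.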

A practical rollout path

You do not need to rebuild the entire stack in a week. A sensible sequence looks like this:

- Inventory which tools and systems each agent can access today.

- Replace the highest-risk long-lived secrets with brokered short-lived credentials.

- Add run-level identity and delegation context.

- Split actions into read, write, and dangerous write.

- Add approval and audit logging around sensitive flows.

This sequence cuts the biggest risks first and creates a path toward full policy-based access later.

Summary

Agents need access, but they do not need broad standing power. The durable pattern is runtime identity plus brokered, short-lived credentials with clear policy and audit trails. That design contains blast radius, improves reviewability, and fits the way modern agents actually behave.

If your agent can act, it needs an identity. If it has an identity, it should earn its permissions one narrow step at a time.
