Shadow Mode for AI Agents: Test Real Workflows Before You Grant Real Power

One of the strangest habits in software is asking a brand-new agent to make real decisions on day one. Nobody would hire a new operator, hand them production credentials, and say "Surprise us." Yet teams do exactly that with agentic systems all the time.

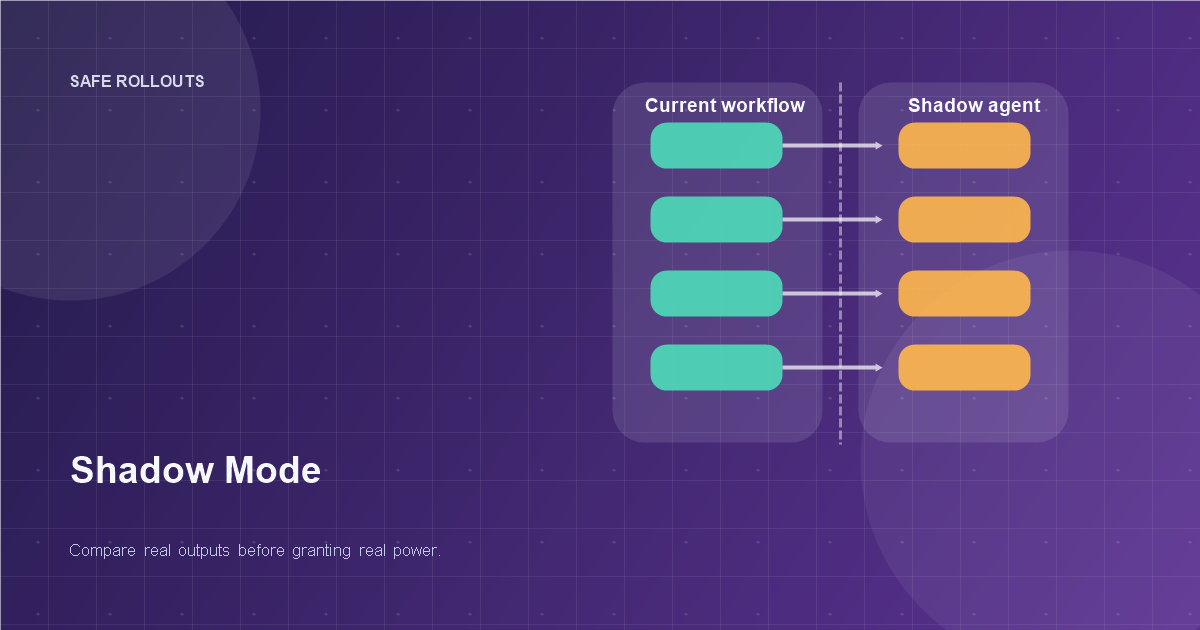

Shadow mode is the grown-up alternative. The agent sees real work, produces real outputs, and touches none of the live controls. You get evidence before exposure.

TL;DR

Run the agent beside the current workflow before letting it act. Compare output quality, timing, escalation behavior, and failure patterns against the live baseline. Launch only after you understand where the agent is better, worse, or weird.

What shadow mode actually is

In shadow mode, the agent processes the same inputs as the production workflow but does not execute the final action. A human, rule system, or existing automation remains the source of truth.

That sounds simple, but it changes what you get to observe. Instead of testing on toy prompts, you see:

- messy real-world inputs

- timing issues

- missing context

- edge-case workflows

- human override patterns

In other words, you stop evaluating the agent in a lab and start evaluating it in the habitat where it will eventually hunt.
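
The core mechanic described above (same input, agent proposes, only the baseline acts) can be sketched in a few lines. This is a minimal illustration, not a reference implementation: `run_in_shadow`, the `baseline` and `agent` callables, and the in-memory `log` list are all hypothetical names, and it assumes the agent function has no side effects of its own.

```python
from typing import Any, Callable, Dict, List


def run_in_shadow(
    task: Any,
    baseline: Callable[[Any], Any],
    agent: Callable[[Any], Any],
    log: List[Dict[str, Any]],
) -> Any:
    """Run the baseline for real; run the agent only to record its proposal."""
    # The baseline stays the source of truth: its result is the only one returned.
    live_action = baseline(task)

    # Assumption: agent() is side-effect free. Its output is recorded, never executed.
    try:
        proposed, error = agent(task), None
    except Exception as exc:
        # An agent crash is logged and must never break the live path.
        proposed, error = None, repr(exc)

    log.append(
        {"task": task, "baseline": live_action, "proposed": proposed, "error": error}
    )
    return live_action
```

Whatever the agent proposes, the caller only ever receives the baseline's action. The log is what you review later.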

Why teams skip it and regret it

Teams usually skip shadow mode for three reasons:

- They are in a hurry

- They think offline evals are enough

- They underestimate integration weirdness

Offline evals are useful, but they are not a substitute for production-shaped reality. Real workflows contain broken fields, stale permissions, missing attachments, duplicate tickets, and users who write like they are being chased.

Shadow mode reveals operational truth. It is where "the model looked great in testing" goes to meet the actual customer backlog.

If you want the evaluation side of this in more depth, see /posts/evaluation-and-safety-of-agentic-systems.

The four questions shadow mode should answer

Do not run a shadow deployment just to feel responsible. Run it to answer clear questions:

- Does the agent choose the right action?

- Does it ask for human help at the right moments?

- Is it fast enough for the workflow?

- What failure modes appear only with real traffic?

These questions are much more useful than a vague success rate. They tell you whether the agent is safe to promote and where to tighten the system first.

Compare against the current workflow, not your hopes

A shadow run should always have a baseline. Usually that baseline is one of three things:

- a human operator

- an existing automation

- the current non-agent workflow

Then compare on dimensions that matter:

- action correctness

- time to decision

- escalation rate

- cost per handled task

- user-visible quality

This matters because a lot of agent launches are judged against imagination rather than reality. "It feels promising" is not a rollout metric. Side-by-side deltas are.
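
One way to make "side-by-side deltas" concrete is to summarize both sides with the same function and subtract. The sketch below covers four of the dimensions listed above and leaves out user-visible quality, which usually needs a human rating. The `RunStats` fields and the function names are assumptions made for illustration.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List


@dataclass
class RunStats:
    # Hypothetical per-task measurements; adapt the fields to your workflow.
    correct: bool
    seconds_to_decision: float
    escalated: bool
    cost_usd: float


def summarize(runs: List[RunStats]) -> Dict[str, float]:
    """Aggregate one side (agent or baseline) into comparable rates and means."""
    n = len(runs)
    return {
        "correct_rate": sum(r.correct for r in runs) / n,
        "mean_seconds": mean(r.seconds_to_decision for r in runs),
        "escalation_rate": sum(r.escalated for r in runs) / n,
        "mean_cost_usd": mean(r.cost_usd for r in runs),
    }


def deltas(agent_runs: List[RunStats], baseline_runs: List[RunStats]) -> Dict[str, float]:
    """Agent minus baseline for each metric; the sign tells you the direction."""
    a, b = summarize(agent_runs), summarize(baseline_runs)
    return {key: a[key] - b[key] for key in a}
```

A negative `mean_seconds` delta means the agent decided faster than the baseline. A negative `correct_rate` delta means it was right less often. Both can be true at once, which is exactly why the comparison should be per dimension rather than a single score.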

Capture disagreements as the main event

Agreement is comforting, but disagreement is where the value lives. Every time the shadow agent disagrees with the baseline, log it for review.

Useful disagreement buckets:

- agent was right, baseline was wrong

- baseline was right, agent was wrong

- both were acceptable, but different

- neither was acceptable

This helps avoid a common blind spot: assuming disagreement equals failure. Sometimes the agent is finding a faster or cleaner path. Sometimes it is inventing one. You need both categories separated, or the review queue turns into a fog machine.
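
The four buckets map cleanly onto two reviewer judgments: was the agent's action acceptable, and was the baseline's? A small sketch of that mapping, with `Verdict`, `bucket`, and `tally` as illustrative names:

```python
from collections import Counter
from enum import Enum
from typing import Iterable, Tuple


class Verdict(Enum):
    AGENT_RIGHT = "agent was right, baseline was wrong"
    BASELINE_RIGHT = "baseline was right, agent was wrong"
    BOTH_ACCEPTABLE = "both were acceptable, but different"
    NEITHER_ACCEPTABLE = "neither was acceptable"


def bucket(agent_ok: bool, baseline_ok: bool) -> Verdict:
    """Classify one reviewed disagreement from two yes/no reviewer judgments."""
    if agent_ok and baseline_ok:
        return Verdict.BOTH_ACCEPTABLE
    if agent_ok:
        return Verdict.AGENT_RIGHT
    if baseline_ok:
        return Verdict.BASELINE_RIGHT
    return Verdict.NEITHER_ACCEPTABLE


def tally(reviews: Iterable[Tuple[bool, bool]]) -> Counter:
    """Count disagreements per bucket; input is (agent_ok, baseline_ok) pairs."""
    return Counter(bucket(agent_ok, baseline_ok) for agent_ok, baseline_ok in reviews)
```

Keeping the reviewer's job to two yes/no answers per disagreement is a design choice, not a requirement. It keeps review fast and makes the bucket counts easy to trust.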

Shadow mode needs observability, not vibes

If shadow runs are not traceable, you are just generating extra confusion at scale. For each run, capture:

- the input

- the context retrieved

- the proposed action

- the confidence or justification signal

- the baseline action

- the reviewer verdict

This is where operational telemetry becomes essential. The replay and audit habits from /posts/agent-observability-and-ops fit perfectly here.

The practical rule is blunt: if you cannot inspect a shadow disagreement in under two minutes, your rollout process is under-instrumented.
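
As a rough sketch, the six items above fit in one record per run. The field names below are assumptions rather than a standard schema, and `run_id` is added only so a reviewer can look a run up quickly.

```python
import json
from dataclasses import asdict, dataclass
from typing import List, Optional


@dataclass
class ShadowRecord:
    run_id: str                      # hypothetical lookup key, not in the list above
    input: str
    context_retrieved: List[str]
    proposed_action: str
    justification: str               # confidence or justification signal
    baseline_action: str
    reviewer_verdict: Optional[str] = None  # filled in after human review

    @property
    def disagrees(self) -> bool:
        """True when the agent's proposal differs from what the baseline did."""
        return self.proposed_action != self.baseline_action

    def to_json(self) -> str:
        """One JSON object per run, suitable for a line-oriented log file."""
        return json.dumps(asdict(self), sort_keys=True)
```

Writing one JSON line per run is one simple way to stay inside the two-minute rule: a disagreement can be found with a text search on `run_id` and read without any special tooling.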

Start with read-only and low-consequence workflows

Not every workflow deserves the same launch sequence. Start where mistakes are visible but contained:

- draft replies

- ticket triage suggestions

- CRM enrichment proposals

- internal research summaries

Avoid beginning with high-consequence actions like account closure, approval decisions, or irreversible financial updates. Teams often do the opposite because those are the most exciting demos. Excitement is not a deployment strategy.

Shadow mode works best when it earns credibility in boring places first.

Add promotion gates before full launch

Shadow mode should end with a decision, not a shrug. Define promotion gates in advance:

- minimum agreement with the approved baseline

- maximum unsafe-action rate

- acceptable escalation behavior

- acceptable latency and cost

- no unresolved critical failure patterns

Without gates, shadow mode becomes an expensive ritual where everyone agrees the data is "interesting" and nobody knows what to do next.

This is also where small teams benefit from simplicity. A short scorecard is better than a perfect framework that never gets finished.
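
A short scorecard can be as small as one function that returns the gates that failed. In the sketch below every threshold field and metric key is a placeholder to be set by the team, and "acceptable escalation behavior" is simplified to a maximum escalation rate, which is an assumption and may be too crude for some workflows.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Gates:
    # Placeholder thresholds; agree on real values before the shadow run starts.
    min_agreement: float
    max_unsafe_rate: float
    max_escalation_rate: float
    max_p95_latency_s: float
    max_cost_per_task_usd: float


def evaluate_gates(
    metrics: Dict[str, float], gates: Gates, open_critical_issues: int
) -> List[str]:
    """Return the names of failed gates; an empty list means promote."""
    failures = []
    if metrics["agreement"] < gates.min_agreement:
        failures.append("agreement")
    if metrics["unsafe_rate"] > gates.max_unsafe_rate:
        failures.append("unsafe_rate")
    if metrics["escalation_rate"] > gates.max_escalation_rate:
        failures.append("escalation_rate")
    if metrics["p95_latency_s"] > gates.max_p95_latency_s:
        failures.append("latency")
    if metrics["cost_per_task_usd"] > gates.max_cost_per_task_usd:
        failures.append("cost")
    if open_critical_issues > 0:
        failures.append("unresolved_critical_failures")
    return failures
```

Returning the list of failures rather than a single boolean keeps the decision explicit: the shadow phase ends with either "promote" or a named reason not to.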

Use partial promotion, not a dramatic flip

Once the shadow phase looks good, do not jump straight to full autonomy. Promote in steps:

- Suggest only

- Human approves with one click

- Agent executes low-risk cases automatically

- Expand scope gradually

This mirrors how trust is built in real operations. The agent proves itself with increasingly real responsibility. The humans get time to adapt. The organization learns where its comfort boundary actually is.
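
The staged promotion above can be expressed as an ordered set of stages plus one function that decides what happens to a proposed action. This is a sketch under assumptions: the stage names, the `risk` labels, and the choice to let the expanded stage auto-execute medium-risk cases are all illustrative, since the right scope depends on the workflow.

```python
from enum import IntEnum


class Stage(IntEnum):
    SHADOW = 0
    SUGGEST_ONLY = 1
    ONE_CLICK_APPROVAL = 2
    AUTO_LOW_RISK = 3
    EXPANDED_SCOPE = 4


def handling(stage: Stage, risk: str) -> str:
    """Decide how a proposed action is handled; risk is 'low', 'medium', or 'high'."""
    if stage == Stage.SHADOW:
        return "log_only"
    if stage == Stage.SUGGEST_ONLY:
        return "show_suggestion"
    if stage == Stage.ONE_CLICK_APPROVAL:
        return "await_approval"
    if stage == Stage.AUTO_LOW_RISK:
        return "execute" if risk == "low" else "await_approval"
    # EXPANDED_SCOPE: assumption that medium-risk cases are the next to be automated.
    return "execute" if risk in ("low", "medium") else "await_approval"
```

Because the stage is a single value, rolling back is a one-line change rather than a redeploy of the agent itself.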

That is especially important in 2026, because many teams are not just testing the model. They are testing whether the business is emotionally prepared to let the model act.

A short example: support triage done right

Imagine a support team wants an agent to categorize tickets and route them to the right queue. In shadow mode, the agent classifies every incoming ticket but does not alter routing. Reviewers compare the agent's choice to the human-assigned queue over two weeks.

The first lesson is not model quality. It is taxonomy confusion. Humans disagree with each other nearly as much as they disagree with the agent. That is a good outcome because shadow mode surfaced a process problem before launch. The second lesson is that the agent performs best on urgent billing cases and worst on product bugs with vague language. That gives the team a clear phased rollout: launch billing first, keep bugs under review.

That is the real power of shadow mode. It does not just test the agent. It clarifies the workflow.
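
The per-queue finding in this example comes from breaking agreement down by category instead of reporting one overall number. A minimal sketch of that breakdown follows, grouping by the human-assigned queue. The function name and the row shape are assumptions.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def agreement_by_queue(rows: Iterable[Tuple[str, str]]) -> Dict[str, float]:
    """Share of tickets per human-assigned queue where the agent chose the same queue.

    Each row is (agent_queue, human_queue).
    """
    matches = defaultdict(int)
    totals = defaultdict(int)
    for agent_queue, human_queue in rows:
        totals[human_queue] += 1
        if agent_queue == human_queue:
            matches[human_queue] += 1
    return {queue: matches[queue] / totals[queue] for queue in totals}
```

Running the same function on pairs of human reviewers instead of agent and human is one way to check whether the taxonomy itself is the problem, as it was in the example.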

The anti-patterns to avoid

Three mistakes show up constantly:

- running shadow mode without reviewers

- reviewing only aggregate metrics

- keeping the agent in shadow forever

Without reviewers, you have data but no judgment. With only aggregate metrics, you miss the failure shape. And if the agent never leaves shadow mode, you are not managing risk. You are avoiding decisions.

The goal is evidence-backed deployment, not permanent purgatory.

Summary

Shadow mode is the safest way to make an agent face reality before reality has to face the agent. Run it beside the current workflow, log disagreements carefully, define promotion gates in advance, and move into autonomy in stages rather than all at once.

The best launch plan for an AI agent is not bravery. It is rehearsal.
