The Small-Model, Large-Verifier Pattern for AI Agents

One of the biggest 2026 shifts in agent design is psychological as much as technical: teams are finally giving up the idea that the biggest model should do every part of every job.

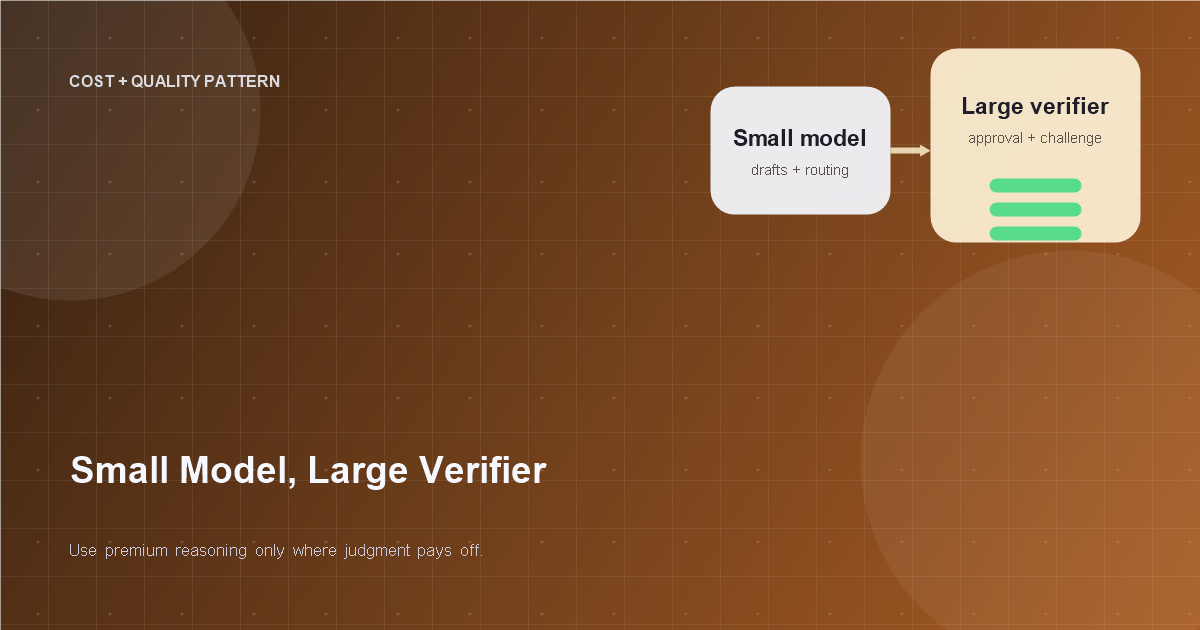

That realization leads to a very practical pattern. Let the smaller, cheaper model do most of the routine work. Bring in the stronger model selectively to verify, challenge, or approve the result when the stakes or uncertainty justify the extra spend.

Think of it less as "cheap model plus smart model" and more as "operator plus reviewer." It is one of the cleanest ways to improve both cost and quality at the same time.

TL;DR

Use smaller models for drafting, extraction, routing, and routine tool use. Use a stronger verifier for risky steps, ambiguous outputs, or final approval on sensitive actions. The value comes from selective review, not from doubling every call.

Why this pattern is winning now

The economics are obvious. Premium models are powerful, but using them for every low-risk step is like hiring senior counsel to label folders. At the same time, teams learned that smaller models can handle a surprising amount of structured work when the task shape is clear.

The trick is accepting that speed and cost savings alone are not enough. The system still needs a way to catch fragile reasoning, bad tool choices, or polished nonsense. That is where the verifier enters.

This pattern is showing up everywhere because it matches the maturity of the field. Teams no longer want just "the smartest answer." They want reliable throughput with defensible costs.

Separate execution from judgment

The core design move is to split two responsibilities:

- Execution model: does the work quickly

- Verifier model: checks whether the work should stand

That separation matters because generation and judgment are not the same task. A model that is good at producing fluent output is not automatically the best choice for evaluating whether a result is grounded, compliant, or complete.

This is the same lesson good organizations learned centuries ago. The person moving fast and the person approving the move do not always need to be the same person.

Where the small model should lead

Smaller models are often perfectly adequate for:

- summarization

- classification

- extraction

- simple routing

- first-pass drafting

- straightforward tool plans

These jobs benefit from speed, consistency, and lower cost. They also tend to have clearer evaluation signals, which makes them easier to verify later if needed.

The mistake is not using a small model. The mistake is using it without a clear envelope and pretending confidence is the same thing as correctness.

Where the large verifier earns its keep

Bring in the larger verifier when the output affects risk, trust, or irreversible action.

Good verification targets include:

- final decision memos

- compliance-sensitive outputs

- tool plans that trigger writes

- cases with low confidence or conflicting evidence

- outputs that deviate from expected patterns

Notice the pattern: the stronger model is not there to admire the smaller model's prose. It is there to challenge the result where mistakes are expensive.

Verification should be structured, not mystical

Do not ask the verifier vague questions like "Does this look good?" That is how you pay premium rates for elegant shrugging.

Ask for structured checks:

- Is the answer supported by the provided evidence?

- Did the plan violate any policy constraints?

- Are required fields missing?

- Should this output be approved, revised, or escalated?

Better yet, ask the verifier to return a decision and reason code. Structured verification produces cleaner dashboards, cleaner review flows, and cleaner prompts over time.
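One way to make "a decision and reason code" concrete is to have the verifier reply in JSON and parse it into a small typed record. The sketch below is illustrative only: the field names, the `Verdict` type, and reason codes such as `missing_citation` are assumptions, not a standard schema. It also assumes that a reply which cannot be parsed should be escalated rather than silently approved.

```python
import json
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    APPROVE = "approve"
    REVISE = "revise"
    ESCALATE = "escalate"


@dataclass(frozen=True)
class Verdict:
    decision: Decision
    reason_code: str  # hypothetical codes, e.g. "missing_citation"
    notes: str = ""


def parse_verdict(raw: str) -> Verdict:
    """Parse the verifier's JSON reply into a Verdict.

    Assumption: a malformed or unexpected reply is escalated, never approved.
    """
    try:
        data = json.loads(raw)
        return Verdict(
            decision=Decision(data["decision"]),
            reason_code=str(data["reason_code"]),
            notes=str(data.get("notes", "")),
        )
    except (ValueError, KeyError, TypeError, AttributeError):
        # json.JSONDecodeError is a ValueError; an unknown decision string
        # also raises ValueError from the Enum lookup.
        return Verdict(Decision.ESCALATE, "unparseable_verdict", raw[:200])
```

Because every verdict carries a reason code, counting codes over time shows which checks fail most often, which is what feeds the dashboards and prompt fixes mentioned above.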

Trigger verification selectively

The entire pattern fails if every small-model step gets sent to the big model anyway. Then you have not built a smart architecture. You have built a more complicated invoice.

Common triggers for verification:

- high-risk job type

- confidence below threshold

- retrieved evidence is stale or conflicting

- output contains sensitive action requests

- anomaly detected in latency, tool usage, or format

Selective verification is the difference between a scalable pattern and a performative one.
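The triggers listed above can be expressed as a plain function that runs before any verifier call. This is a minimal sketch under stated assumptions: the `StepResult` fields, the job-type names, and the 0.8 confidence floor are all hypothetical and would need tuning per workflow.

```python
from dataclasses import dataclass
from typing import List

HIGH_RISK_JOBS = {"payment", "contract_signoff"}  # hypothetical job types
CONFIDENCE_FLOOR = 0.8  # illustrative threshold, not a recommendation


@dataclass
class StepResult:
    job_type: str
    confidence: float  # however the system estimates it
    evidence_stale: bool = False
    evidence_conflicts: bool = False
    requests_sensitive_action: bool = False
    anomaly: bool = False  # latency, tool usage, or format looked unusual


def verification_triggers(step: StepResult) -> List[str]:
    """Return the names of the triggers that fired; empty means skip the verifier."""
    triggers = []
    if step.job_type in HIGH_RISK_JOBS:
        triggers.append("high_risk_job")
    if step.confidence < CONFIDENCE_FLOOR:
        triggers.append("low_confidence")
    if step.evidence_stale or step.evidence_conflicts:
        triggers.append("stale_or_conflicting_evidence")
    if step.requests_sensitive_action:
        triggers.append("sensitive_action")
    if step.anomaly:
        triggers.append("anomaly")
    return triggers
```

Returning the list of fired triggers, rather than a bare boolean, makes it easy to log why a step was verified and to see which trigger drives most of the verifier spend.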

For teams already managing spend aggressively, this goes hand in hand with /posts/agent-cost-control-for-small-teams.

The verifier can approve, revise, or escalate

A useful verifier is not limited to yes or no. It should have three practical options:

- Approve: output is good enough to continue

- Revise: send back targeted feedback or request a retry

- Escalate: route to a human or stronger workflow

That middle option is especially valuable. Many errors do not need a full restart. They need a narrower correction such as "missing citation," "insufficient evidence," or "tool plan exceeds allowed scope."

This turns the stack into a feedback loop instead of a single pass/fail gate.
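A sketch of that feedback loop, assuming the executor and verifier are passed in as plain callables. The function name, the tuple-shaped verdict, and the revision budget are assumptions made for illustration; real model calls would sit behind `execute` and `verify`.

```python
from typing import Callable, Optional, Tuple

# (decision, reason_code), where decision is "approve", "revise", or "escalate"
Verdict = Tuple[str, str]


def run_with_review(
    task: str,
    execute: Callable[[str, Optional[str]], str],
    verify: Callable[[str, str], Verdict],
    max_revisions: int = 2,
) -> Tuple[str, Optional[str], str]:
    """Return (status, output, reason_code), status being "approved" or "escalated"."""
    feedback: Optional[str] = None
    draft: Optional[str] = None
    for _ in range(max_revisions + 1):
        draft = execute(task, feedback)  # small model: do the work
        decision, reason = verify(task, draft)  # large model: judge the work
        if decision == "approve":
            return "approved", draft, reason
        if decision != "revise":
            # "escalate", or anything unexpected, goes to a human or stronger workflow
            return "escalated", draft, reason
        feedback = reason  # retry with the targeted correction only
    # Assumption: an exhausted revision budget escalates instead of looping forever
    return "escalated", draft, "revision_budget_exhausted"
```

The revision budget matters for cost: without it, a draft that the verifier keeps rejecting would generate unbounded calls to both models.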

Watch for correlated failure

One catch with this pattern is that both models can share the same blind spot, especially if they are prompted from the same assumptions or grounded on the same weak evidence. A verifier cannot rescue a bad retrieval layer with pure eloquence.

That means you still need:

- strong evidence selection

- external policy checks

- deterministic validation where possible

- replayable traces for disagreements

Verification is not magic. It is one control in a larger system.
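Deterministic validation is the cheapest of those controls because it needs no model at all. The following sketch assumes the small model's output is a dict with a hypothetical schema (`summary`, `citations`, optional `tool_calls`) and a hypothetical allow-list of read-only tools; none of these names come from a specific framework.

```python
from typing import List

REQUIRED_FIELDS = ("summary", "citations")  # hypothetical output schema
ALLOWED_TOOLS = {"search_docs", "read_record"}  # hypothetical read-only tools


def deterministic_checks(output: dict) -> List[str]:
    """Return problem codes found without calling any model; empty means clean."""
    problems = []
    for field in REQUIRED_FIELDS:
        if not output.get(field):  # absent or empty both count as missing
            problems.append(f"missing_field:{field}")
    for call in output.get("tool_calls", []):
        name = call.get("name")
        if name not in ALLOWED_TOOLS:
            problems.append(f"tool_out_of_scope:{name}")
    return problems
```

Running checks like these before the verifier means the larger model is only asked questions that code cannot answer, and a shared blind spot between the two models cannot hide a missing field or a disallowed tool call.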

If your evidence layer is messy, fix that too. /posts/knowledge-freshness-for-ai-agents covers that companion problem.

A practical example: contract review

Imagine a contract agent processing inbound vendor agreements. A smaller model extracts clauses, identifies unusual terms, and drafts a summary. That is fast and cheap. A stronger verifier reviews only contracts with red flags: missing indemnity language, nonstandard liability caps, or vague data-handling terms.

The verifier does not rewrite every summary from scratch. It checks whether the flagged issues are real, whether the draft missed anything material, and whether the case can proceed or should be escalated to legal.

This setup saves cost on routine agreements while concentrating premium reasoning where it matters. That is the pattern at its best.
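The routing step in this example might look like the sketch below. Everything specific in it is an assumption made for illustration: the shape of the extracted terms, the idea of expressing a liability cap as a multiple of annual fees, which multiples count as standard, and the phrases treated as vague. A real deployment would take these from the legal team's playbook.

```python
from typing import List, Optional, TypedDict


class ExtractedTerms(TypedDict, total=False):
    indemnity_clause: Optional[str]
    liability_cap_multiple: Optional[float]  # cap as a multiple of annual fees
    data_handling_clause: Optional[str]


STANDARD_CAP_MULTIPLES = {1.0, 2.0}  # hypothetical "standard" caps
VAGUE_PHRASES = ("as appropriate", "reasonable efforts", "from time to time")


def red_flags(terms: ExtractedTerms) -> List[str]:
    """Flags raised on the small model's extraction; any flag sends the contract to the verifier."""
    flags = []
    if not terms.get("indemnity_clause"):
        flags.append("missing_indemnity")
    if terms.get("liability_cap_multiple") not in STANDARD_CAP_MULTIPLES:
        flags.append("nonstandard_liability_cap")  # a missing cap is flagged too
    data_clause = (terms.get("data_handling_clause") or "").lower()
    if not data_clause or any(phrase in data_clause for phrase in VAGUE_PHRASES):
        flags.append("vague_data_handling")
    return flags
```

Contracts that return an empty list proceed on the small model's summary alone; the rest go to the verifier with the flags attached, so it knows which issues to confirm or dismiss.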

Measure the architecture, not just the models

When teams evaluate this pattern, they often compare model A versus model B and miss the more important question: does the combined system outperform a single-model baseline?

Track:

- cost per accepted outcome

- verifier invocation rate

- escalation rate

- false approvals

- false rejections

- latency by workflow tier

Those metrics tell you whether the architecture is genuinely improving the system or just moving complexity around.
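Most of these metrics fall out of a simple per-run log. The sketch below assumes each run records its total cost, whether the verifier was invoked, and a ground-truth `correct` label from a later audit; the `RunRecord` fields are hypothetical, and latency by tier is left out for brevity.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class RunRecord:
    cost_usd: float  # total model spend for the run, executor plus verifier
    verified: bool  # the verifier was invoked
    escalated: bool  # the run was routed to a human or stronger workflow
    accepted: bool  # the system let the output through
    correct: bool  # ground truth from a later audit (assumed available)


def summarize(runs: List[RunRecord]) -> Dict[str, float]:
    """Aggregate system-level metrics over a batch of runs."""
    total = len(runs)
    if total == 0:
        return {}
    accepted = sum(r.accepted for r in runs)
    total_cost = sum(r.cost_usd for r in runs)
    return {
        # Rejected and escalated runs still cost money, so they stay in the numerator
        "cost_per_accepted_outcome": total_cost / accepted if accepted else float("inf"),
        "verifier_invocation_rate": sum(r.verified for r in runs) / total,
        "escalation_rate": sum(r.escalated for r in runs) / total,
        "false_approvals": float(sum(r.accepted and not r.correct for r in runs)),
        "false_rejections": float(sum(r.correct and not r.accepted for r in runs)),
    }
```

Running the same summary over logs from a single-model baseline gives the system-level comparison described above, rather than a model A versus model B benchmark.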

Summary

The small-model, large-verifier pattern works because it assigns intelligence economically. Smaller models handle the high-volume routine work. Stronger models intervene where judgment matters. Done well, this improves throughput, reduces cost, and preserves quality where the business actually feels mistakes.

The future of agent design is not one giant model doing everything. It is systems that know when extra judgment is worth paying for.
