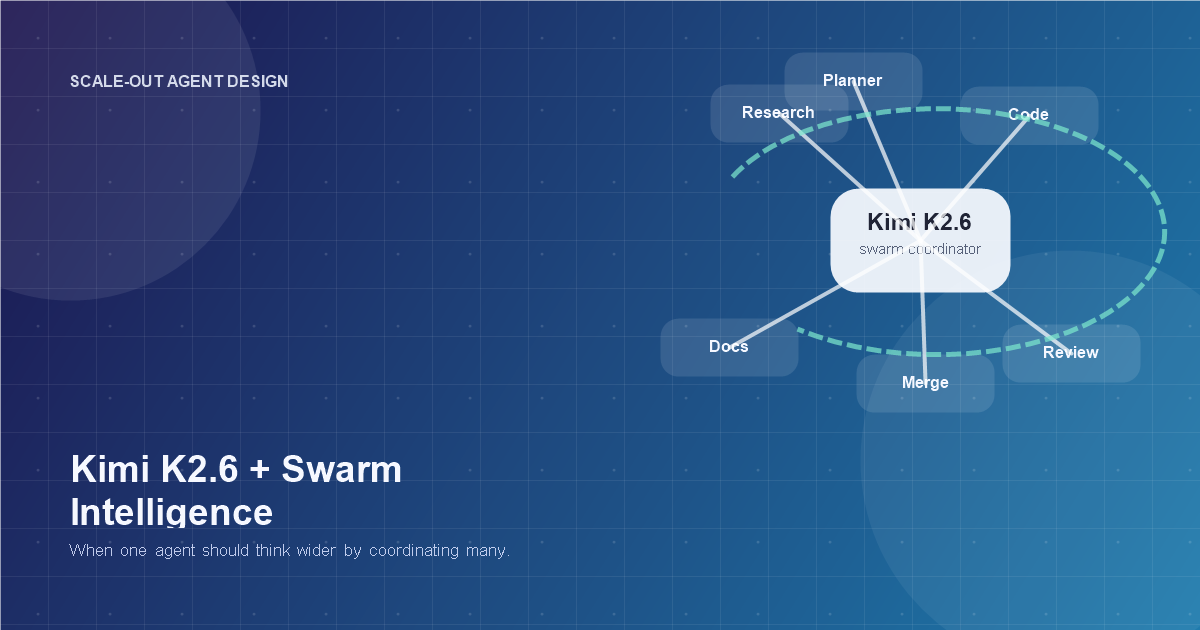

Kimi K2.6 and Swarm Intelligence: When Scale-Out Agents Start to Matter

Every few months, the AI world rediscovers a very old idea with a new logo.

This time the idea is swarm intelligence.

On April 20, 2026, Moonshot AI launched Kimi K2.6, positioning it as a stronger open model for long-horizon coding, coding-driven design, proactive agents, and, notably, agent swarm capabilities. That matters because swarm rhetoric has usually run ahead of actual product usefulness. The pitch sounded futuristic long before the operational patterns existed to back it up.

K2.6 makes that conversation more interesting.

Not because "more agents" is automatically better. It is not. But because Moonshot is making a more specific argument: that some tasks should be solved by scaling out across many specialized agents instead of forcing one model to carry the whole workload in sequence. That is a real architectural claim, not just marketing wallpaper.

The useful question now is not, "Are swarms the future?" The useful question is, what kinds of work become materially better when an agent can decompose, parallelize, reconcile, and keep going without collapsing into context soup?

What Kimi K2.6 actually adds

According to Moonshot's April 20, 2026 K2.6 launch post, the model pushes in four directions at once:

- stronger long-horizon coding

- coding-driven UI and lightweight full-stack generation

- more capable proactive agents

- expanded swarm orchestration

Moonshot says K2.6 can scale to 300 sub-agents across 4,000 coordinated steps, up from 100 sub-agents and 1,500 tool calls (steps) in the earlier Agent Swarm preview from the K2.5 era. The company also presents K2.6 as a natively multimodal model, with a documented 256K context window on the API and support for both thinking and non-thinking modes.

That combination matters more than any single benchmark number.

Swarm systems do not get more useful just because the base model is smart. They get more useful when the model can:

- split work cleanly

- keep specialists focused

- recover from failures

- merge partial outputs without producing a haunted mess

That last part is where many multi-agent demos go to die.
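To make "merge without a haunted mess" concrete, here is a minimal sketch of a reconciliation step, assuming each sub-agent returns simple key/value findings. The `Finding` type, the keys, and the conflict rule are hypothetical illustrations, not Moonshot's implementation: findings that agree are merged, and keys where workers disagree are surfaced instead of silently overwritten.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """Hypothetical shape of one sub-agent result."""
    worker: str
    key: str    # what the finding is about, e.g. "acme.founded"
    value: str  # what the worker claims


def reconcile(findings: list[Finding]) -> tuple[dict[str, str], dict[str, list[str]]]:
    """Merge findings that agree; return disagreements separately instead of guessing."""
    values_by_key: dict[str, set[str]] = defaultdict(set)
    for finding in findings:
        values_by_key[finding.key].add(finding.value)

    merged: dict[str, str] = {}
    conflicts: dict[str, list[str]] = {}
    for key, values in values_by_key.items():
        if len(values) == 1:
            merged[key] = values.pop()        # every worker that touched this key agreed
        else:
            conflicts[key] = sorted(values)   # surface for a follow-up task
    return merged, conflicts


if __name__ == "__main__":
    merged, conflicts = reconcile([
        Finding("agent-1", "acme.hq", "Berlin"),
        Finding("agent-2", "acme.hq", "Berlin"),
        Finding("agent-3", "acme.founded", "2011"),
        Finding("agent-4", "acme.founded", "2012"),
    ])
    print(merged)     # {'acme.hq': 'Berlin'}
    print(conflicts)  # {'acme.founded': ['2011', '2012']}
```

Conflicts become follow-up work for the coordinator. Letting the last writer win is how a merged report ends up confidently wrong.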

Swarm intelligence is not "many bots doing stuff"

The phrase sounds grand, but in practice swarm intelligence usually means something less mystical and more operational:

- a coordinator creates sub-agents

- the sub-agents work in parallel on decomposed tasks

- the system compares, merges, ranks, or reconciles their outputs

- the coordinator assigns follow-up work based on what came back

That is the software version.
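The four steps above can be sketched as a plain fan-out/fan-in loop. This is a minimal illustration using Python's standard `concurrent.futures`, not Kimi's orchestration API: `decompose`, `run_subagent`, and `plan_follow_ups` are hypothetical stand-ins for model calls, and the coordinator is capped at a fixed number of rounds so it cannot loop forever.

```python
from concurrent.futures import ThreadPoolExecutor


def decompose(goal: str) -> list[str]:
    # Hypothetical: a real coordinator would ask the model to split the goal.
    return [f"{goal}: part {i}" for i in range(3)]


def run_subagent(task: str) -> str:
    # Hypothetical stand-in for one sub-agent call (model plus tools).
    return f"result for {task}"


def plan_follow_ups(results: list[str]) -> list[str]:
    # Hypothetical rule: re-check part 0 once, then stop.
    return [r.replace("result for", "verify", 1)
            for r in results if "part 0" in r and "verify" not in r]


def coordinate(goal: str, max_rounds: int = 3, max_workers: int = 8) -> list[str]:
    tasks = decompose(goal)                                # 1. create sub-tasks
    collected: list[str] = []
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        for _ in range(max_rounds):                        # hard cap on coordination rounds
            if not tasks:
                break
            results = list(pool.map(run_subagent, tasks))  # 2. run in parallel
            collected.extend(results)                      # 3. merge (here: just collect)
            tasks = plan_follow_ups(results)               # 4. assign follow-up work
    return collected


if __name__ == "__main__":
    for line in coordinate("map competitors"):
        print(line)
```

Step 3 only concatenates here. In a real system, that line is where the reconciliation logic, and most of the debugging, lives.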

The older biological version comes from ants, bees, birds, and other distributed systems where useful global behavior emerges from local rules. AI people love referencing this because it sounds elegant and inevitable. Sometimes it is. Sometimes it is just a fancy way to describe "we spawned thirty workers and now we have thirty places to debug."

Kimi's own Agent Swarm framing is unusually direct here. Moonshot explicitly argues that the bottleneck is not just model quality but the single-agent, sequential execution model itself. That is a stronger claim than "our model got better." It says there is a structural ceiling to how far one agent can push broad research, mass discovery, large document processing, and perspective-heavy analysis.

That claim is not crazy.

It is also not universal.

Where K2.6's swarm story is genuinely compelling

Swarm systems are strongest when three things are true at once:

- The task can be broken into semi-independent subtasks.

- The subtasks benefit from parallel execution.

- There is a meaningful synthesis step at the end.

K2.6 looks especially well-positioned for that shape of work.

Broad research and discovery

This is the cleanest swarm use case. If you need 100 companies surveyed, 80 papers categorized, or 50 competitors mapped, a single agent becomes a librarian with a migraine. A swarm can split the first-pass search, extraction, filtering, and validation across multiple workers.

This is where Moonshot's "scale out, not just up" framing makes the most sense. The win is not only quality. It is time-to-completion.
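A minimal sketch of the "100 companies surveyed" case, assuming each lookup is an independent, I/O-bound call. `survey_company` is a hypothetical placeholder that just sleeps, and the `asyncio.Semaphore` caps how many lookups run at once, which rate limits and budgets tend to force on you anyway. With 100 lookups of 0.05 s each and 20 in flight, it should finish in well under a second instead of the roughly 5 s a one-at-a-time loop would take.

```python
import asyncio
import time


async def survey_company(name: str) -> dict[str, str]:
    # Hypothetical placeholder for one sub-agent's search + extraction pass.
    await asyncio.sleep(0.05)
    return {"company": name, "status": "surveyed"}


async def survey_all(names: list[str], max_concurrency: int = 20) -> list[dict[str, str]]:
    limit = asyncio.Semaphore(max_concurrency)

    async def bounded(name: str) -> dict[str, str]:
        async with limit:  # never more than max_concurrency lookups in flight
            return await survey_company(name)

    # gather returns results in the same order as the inputs
    return list(await asyncio.gather(*(bounded(n) for n in names)))


if __name__ == "__main__":
    companies = [f"company-{i}" for i in range(100)]
    start = time.perf_counter()
    rows = asyncio.run(survey_all(companies))
    print(len(rows), f"rows in {time.perf_counter() - start:.2f}s")
```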

Coding programs, not just coding tickets

K2.6's launch material leans heavily on long-horizon coding, and that matters for swarm intelligence because codebases naturally decompose:

- one agent can inspect architecture

- one can test integration points

- one can trace dependency breakage

- one can implement the patch

- one can validate the output

That does not mean every bug deserves a swarm. Most do not. But end-to-end engineering tasks are one of the few domains where specialization and reconciliation can produce real gains instead of theatrical parallelism.
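The role list above is really a dependency graph: the three inspection roles can run side by side, while the patch and the validation have to wait. A minimal sketch using Python's standard `graphlib` to turn such a plan into parallel stages. The role names are illustrative assumptions, and a real swarm would not necessarily wait for a whole stage to finish before starting the next role.

```python
from graphlib import TopologicalSorter

# Hypothetical role plan for one engineering task: each role lists the roles it waits on.
PLAN: dict[str, set[str]] = {
    "inspect_architecture": set(),
    "test_integration_points": set(),
    "trace_dependency_breakage": set(),
    "implement_patch": {"inspect_architecture", "test_integration_points",
                        "trace_dependency_breakage"},
    "validate_output": {"implement_patch"},
}


def parallel_stages(plan: dict[str, set[str]]) -> list[list[str]]:
    """Group roles into stages; roles in the same stage can run as parallel sub-agents."""
    sorter = TopologicalSorter(plan)
    sorter.prepare()  # raises graphlib.CycleError if the plan has a cycle
    stages: list[list[str]] = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        stages.append(ready)
        # Marks the whole stage finished at once (a barrier); a live system
        # would call done() as each individual sub-agent completes.
        sorter.done(*ready)
    return stages


if __name__ == "__main__":
    for i, stage in enumerate(parallel_stages(PLAN), start=1):
        print(i, stage)
```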

Multi-format deliverables

Moonshot also pushes a "documents, websites, slides, spreadsheets" output story. That is exactly the kind of workload where swarms can feel less like overengineering and more like an actual team:

- a researcher gathers material

- a writer shapes the narrative

- a designer handles presentation structure

- a checker validates consistency

That is a much better swarm pitch than "twenty agents brainstorm at each other until the tokens run out."

What K2.6 gets right about the limit of single agents

Moonshot's February 9, 2026 Agent Swarm post made a point that deserves more attention than the hype usually gets: single-agent systems degrade structurally on long, branching tasks.

That is true even when the underlying model is strong.

As a task expands, one agent has to:

- remember more history

- manage more branches

- revisit more evidence

- juggle more tools

- compress more context

Eventually, the system starts summarizing its own past to stay alive. That compression is often lossy. Information gets blurred. Alternatives disappear. The agent becomes less of a reasoner and more of a stressed middle manager writing notes to itself.

Swarm architectures attack that problem by distributing memory pressure and work specialization across multiple active threads. In other words, instead of asking one excellent worker to be strategist, analyst, implementer, reviewer, and archivist, the system lets different workers own different slices.
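A back-of-the-envelope sketch of that memory-pressure argument, under deliberately crude assumptions: each branch of a task costs a fixed number of tokens to keep in view, branches do not need each other's context, and each agent has a hypothetical working budget of 200,000 tokens. It ignores the coordinator's own context and the cost of merging, so it shows the direction of the effect, not its size.

```python
def peak_context(branch_tokens: list[int], workers: int) -> int:
    """Largest context any one agent must hold when branches are dealt out greedily."""
    loads = [0] * workers
    for tokens in sorted(branch_tokens, reverse=True):
        loads[loads.index(min(loads))] += tokens  # give the branch to the least-loaded agent
    return max(loads)


def overflow(branch_tokens: list[int], workers: int, budget: int) -> int:
    """Tokens the busiest agent would have to summarize away to stay within budget."""
    return max(0, peak_context(branch_tokens, workers) - budget)


if __name__ == "__main__":
    branches = [40_000] * 12  # hypothetical: 12 branches of ~40K tokens each
    for n in (1, 4):
        print(n, peak_context(branches, n), overflow(branches, n, budget=200_000))
    # 1 480000 280000
    # 4 120000 0
```

The single agent has to compress away more than half of what it saw; four workers each stay comfortably inside the budget. That lossy compression is exactly the "notes to itself" problem described above.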

That is not magic. It is org design.

The part people will oversell

Now the necessary cold shower.

K2.6 does not prove that most teams should rush into swarm architecture.

In fact, most teams still do not need multi-agent systems as their default. They need:

- cleaner task boundaries

- better tool contracts

- stronger permissions

- more reliable evals

- fewer workflow fantasies

A mediocre architecture does not become mature because you replaced one confused agent with fifty enthusiastic ones.

Swarm systems add:

- more scheduling complexity

- more reconciliation logic

- more logging requirements

- more failure modes

- more cost surface area

The trap is obvious. A team sees a compelling demo, notices that K2.6 can coordinate hundreds of sub-agents, and concludes that quantity is now strategy. Usually it is not. Usually the question is still whether the task has enough parallel structure to justify the coordination tax.
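One way to make the coordination tax explicit is a toy wall-clock model: sequential time is the sum of subtask times, while swarm time adds a planning overhead and a per-subtask merge cost on top of the busiest worker's load (assigned longest-task-first). All parameters are hypothetical, and the model leaves out token cost and retries entirely. The point is only that small jobs can come out slower once overhead is counted.

```python
def sequential_minutes(subtask_minutes: list[float]) -> float:
    return sum(subtask_minutes)


def swarm_minutes(subtask_minutes: list[float], workers: int,
                  plan_overhead: float, merge_per_subtask: float) -> float:
    """Toy model (hypothetical parameters): planning + busiest worker + merging every result."""
    loads = [0.0] * workers
    for minutes in sorted(subtask_minutes, reverse=True):
        loads[loads.index(min(loads))] += minutes  # longest task to least-loaded worker
    return plan_overhead + max(loads) + merge_per_subtask * len(subtask_minutes)


def swarm_pays_off(subtask_minutes: list[float], workers: int,
                   plan_overhead: float, merge_per_subtask: float) -> bool:
    return swarm_minutes(subtask_minutes, workers, plan_overhead,
                         merge_per_subtask) < sequential_minutes(subtask_minutes)


if __name__ == "__main__":
    # Broad job: twelve 10-minute subtasks (120 min sequential) vs. a tiny job (6 min).
    print(swarm_minutes([10.0] * 12, 12, plan_overhead=3.0, merge_per_subtask=0.5))  # 19.0
    print(swarm_minutes([2.0] * 3, 3, plan_overhead=3.0, merge_per_subtask=0.5))     # 6.5
```

The broad job drops from 120 to 19 minutes; the tiny job gets slower (6.5 versus 6.0) because the overhead outweighs the parallelism.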

If you want that skeptical case in full, pair this article with /posts/most-teams-do-not-need-multi-agent-systems-yet.

Where swarm intelligence actually earns the complexity

My rule is simple: a swarm earns its keep when it improves at least one of these three things by enough to justify the orchestration overhead.

1. Throughput

If ten sub-agents can finish in ten minutes what one agent would finish in two hours, the argument is obvious.

2. Coverage

If multiple agents can search more broadly, inspect more variants, or challenge each other's blind spots, the swarm may improve the completeness of the work.

3. Perspective diversity

This is the interesting one. Some problems get better when disagreement is built into the system:

- investment research

- product critique

- policy analysis

- literature synthesis

- risk review

Moonshot's older Agent Swarm examples leaned into this with perspective-heavy work, and that instinct is right. A swarm can create structured disagreement in a way a single agent often cannot.
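Structured disagreement can be made explicit rather than averaged away. A minimal sketch, assuming each perspective sub-agent returns a verdict plus a rationale (the `Review` type, the perspective names, and the 0.75 threshold are all hypothetical): the aggregator reports the majority verdict, how strongly it is held, and who dissented, so a split gets escalated instead of flattened.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Review:
    perspective: str  # hypothetical role, e.g. "risk" or "customer"
    verdict: str
    rationale: str


def summarize(reviews: list[Review], consensus_threshold: float = 0.75) -> dict[str, object]:
    """Report the majority verdict, how strongly it is held, and who dissented."""
    if not reviews:
        raise ValueError("need at least one review")
    counts = Counter(r.verdict for r in reviews)
    top_verdict, top_count = counts.most_common(1)[0]  # ties go to the first verdict seen
    agreement = top_count / len(reviews)
    return {
        "verdict": top_verdict,
        "agreement": agreement,
        "needs_review": agreement < consensus_threshold,  # escalate splits, don't average them
        "dissenting_perspectives": [r.perspective for r in reviews if r.verdict != top_verdict],
    }


if __name__ == "__main__":
    print(summarize([
        Review("growth", "ship", "demand signals are strong"),
        Review("risk", "hold", "compliance review is incomplete"),
        Review("customer", "ship", "top accounts asked for it"),
        Review("finance", "hold", "margin impact is unclear"),
    ]))
```

In the sample, the even split comes back with `needs_review` set to `True` and the risk and finance perspectives listed as dissent, which is the information a human reviewer actually needs.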

That is one of the few places where "swarm intelligence" deserves the dramatic name.

K2.6 also hints at a bigger trend: agents becoming organizations

The most interesting part of K2.6 is not the benchmark table. It is the product direction hiding underneath the release.

Moonshot is moving from "agent as assistant" toward agent as organization:

- proactive background work

- persistent execution

- parallel specialists

- task reassignment on failure

- heterogeneous collaborators
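"Task reassignment on failure" is the most mechanical item on that list, and it is easy to sketch. A minimal version, assuming sub-agents are plain callables that raise on failure (the worker names and `AllWorkersFailed` are hypothetical, not part of any Kimi API): the coordinator hands the task to the next available worker and keeps a record of who tried.

```python
from collections.abc import Callable

Worker = Callable[[str], str]


class AllWorkersFailed(RuntimeError):
    """Raised when every candidate worker failed the task (hypothetical error type)."""


def run_with_reassignment(task: str, workers: dict[str, Worker]) -> tuple[str, list[str]]:
    """Try workers in order; on failure, reassign the task to the next one."""
    attempts: list[str] = []
    errors: list[str] = []
    for name, worker in workers.items():
        attempts.append(name)
        try:
            return worker(task), attempts
        except Exception as exc:  # a real coordinator would separate retryable errors
            errors.append(f"{name}: {exc!r}")
    raise AllWorkersFailed("; ".join(errors))


if __name__ == "__main__":
    def flaky_browser(task: str) -> str:
        raise TimeoutError("page load timed out")

    def backup_browser(task: str) -> str:
        return f"done: {task}"

    result, tried = run_with_reassignment(
        "fetch pricing page", {"browser-a": flaky_browser, "browser-b": backup_browser}
    )
    print(result, tried)  # done: fetch pricing page ['browser-a', 'browser-b']
```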

The "Bring Your Own Agents" and Claw Groups direction makes that even clearer. The model is not only being positioned as a worker. It is being positioned as a coordinator for mixed teams of humans and agents with different tools, memories, and runtime contexts.

That is a meaningful shift.

It suggests the next competitive frontier is not just model IQ. It is organizational intelligence:

- Can the system form the right team?

- Can it assign the right work?

- Can it recover when one part fails?

- Can it merge outputs without flattening quality?

That is much closer to operating a company than chatting with a bot.

What builders should do with this right now

If you are evaluating K2.6 seriously, do not start by asking whether it is "better at swarm intelligence." That phrase is too vague to be useful.

Ask narrower questions:

- Which workflows in my stack are embarrassingly parallel?

- Where does one agent currently lose coverage or context?

- Where would independent sub-agents catch more issues than one sequential agent?

- What reconciliation step would decide whether the swarm helped or just generated noise?
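For that last question, the check can be as simple as scoring both setups against a hand-labeled reference set. A minimal sketch, assuming you have such a set and can attribute cost to each run (the `RunResult` type and the sample numbers are hypothetical): recall stands in for coverage, precision for noise, and cost per correct item for whether the extra coverage was worth paying for.

```python
from dataclasses import dataclass


@dataclass
class RunResult:
    label: str
    found: set[str]   # items the run reported
    cost_usd: float   # hypothetical: however you attribute spend to a run


def score(run: RunResult, reference: set[str]) -> dict[str, float]:
    """Coverage (recall), noise (precision), and cost per correct item."""
    hits = run.found & reference
    return {
        "recall": len(hits) / len(reference) if reference else 0.0,
        "precision": len(hits) / len(run.found) if run.found else 0.0,
        "cost_per_hit": run.cost_usd / len(hits) if hits else float("inf"),
    }


if __name__ == "__main__":
    reference = {"a", "b", "c", "d"}  # hand-labeled ground truth (hypothetical)
    single = RunResult("single agent", {"a", "b"}, cost_usd=1.0)
    swarm = RunResult("swarm", {"a", "b", "c", "x", "y"}, cost_usd=4.0)
    for run in (single, swarm):
        print(run.label, score(run, reference))
```

In this made-up example the swarm finds more (recall 0.75 versus 0.5) but also adds noise (precision 0.6) and costs more per correct item. Deciding whether that trade is worth it is the reconciliation step's job.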

Then test on workflows that naturally parallelize:

- large-scale research

- codebase inspection

- document set synthesis

- multi-path planning

- structured critique from multiple viewpoints

Do not start with tightly coupled transactional work. That is the fastest way to invent a coordination problem and then blame the model for it.

The practical takeaway

Kimi K2.6 is interesting because it makes a stronger case for scale-out agent design than most model releases do. The April 20, 2026 launch is not just another "our benchmark bars are taller" announcement. It is a signal that at least one major model provider thinks the next layer of improvement comes from better orchestration, longer-running autonomy, and more capable specialist coordination.

That does not mean swarms are suddenly the right answer for every team.

It does mean the question has matured.

Swarm intelligence is no longer just a research toy or conference-stage metaphor. With K2.6, it is becoming a practical systems question:

When should one very capable agent think longer, and when should a coordinated group of agents think wider?

That is a much better question than "How many agents can we spawn?" and finally, in 2026, it is one worth taking seriously.
